Robots as rockstars: how artificial intelligence is the future of making music

We are Borg. Resistance is futile.

Last week, the researchers at OpenAI released Jukebox, a machine learning model that generates music as raw audio, including vocals, in a variety of genres. Below is a jazz song that the model generated in the style of Frank Sinatra and Ella Fitzgerald. You can find more model-generated songs through their model exploration tool.

OpenAI’s Jukebox is just a demonstration of their general ability to train neural networks, and I doubt that they, as a research lab, will commercialize this technology. But AI-generated music represents the future of the industry. Not too long from now, neural networks will be involved in every step of music production, from lyric writing to mastering. In fact, there is already a long history of AI in music-making.

Major developments in the use of AI for music creation

1821 — Dietrich Nikolaus Winkel’s Componium

Long before computing or artificial intelligence truly existed, Winkel—also known as the inventor of the metronome—created the Componium: a pipe organ that generated pseudorandom compositions using a system of two rotating barrels.

Depicted: Winkel’s Componium. Photo credit: Wikimedia user Jbumw.

1957 — Illiac Suite

University of Illinois at Urbana-Champaign professors Lejaren Hiller and Leonard Isaacson programmed the ILLIAC I computer to generate the Illiac Suite.

Depicted: A video of the beginning of a performance of the Illiac Suite.

1965 — Ray Kurzweil appearing on I’ve Got A Secret

The AI futurist Ray Kurzweil, then only seventeen years old, appeared on the television game show I’ve Got A Secret to play a piano piece composed by a computer program that he had written.

Depicted: The I’ve Got A Secret segment depicting teenaged Ray Kurzweil and his computer-composed piano piece.

1981-Present — EMI and Emily Howell by David Cope

The researcher David Cope created the computer program EMI over many years. EMI stands for “Experiments in Musical Intelligence” and is capable of producing works that mimic the style of composers like Bach. Subsequently, he created Emily Howell, a computer program that is a successor to EMI. Both computer programs have released music on the classical music record label Centaur Records.

Depicted: A composition produced by Emily Howell.

Oct 2010 — Iamus’s “Opus One”

The University of Málaga in Spain hosts the Melomics research project. The project centers on music composition and conducts its research using two computer clusters: Melomics109 and Iamus. In October 2010, Iamus composed “Opus One,” a fragment of classical music designed to be original rather than a mimicry of an existing composer’s style. Iamus later composed Hello World!, its first full composition.

Depicted: A performance of Iamus’s Hello World!

Sep 2016 — “Daddy’s Car” by Sony Computer Science Laboratories (CSL)

In September 2016, Sony CSL released “Daddy’s Car,” an AI-generated single in the style of The Beatles. This was the lead single for Hello World*, an AI-generated album that Sony CSL released in collaboration with various artists using its toolkit, Flow Machines.

*Not to be confused with the composition from Iamus

Oct 2016 — Alex Da Kid releases “Not Easy” with IBM Watson

The producer Alex Da Kid released his debut single “Not Easy,” featuring X Ambassadors, Elle King, and Wiz Khalifa. In addition to these superstar artists, Alex worked with IBM Watson BEAT, a technology designed to help guide the songwriting and music production process. IBM later publicly released the code for Watson BEAT on GitHub. Then-IBM employee Anna Chaney also wrote a blog post about using Watson BEAT to produce music.

Aug 2017 — “Break Free” by Taryn Southern

“Break Free” was the first single on Taryn Southern’s album I AM AI. She developed her album using the toolkit developed by the company Amper Music. She spoke about her AI-driven composition process with Lizzie Plaugic and Dan Schawbel.

Depicted: The official music video for Taryn Southern’s “Break Free.”

Nov 2017 — Coditany of Timeness by Dadabots

Dadabots is simultaneously a death metal band and a machine learning research group. In November 2017, they released their album Coditany of Timeness as part of a submission to the AI conference NeurIPS. Dadabots spoke with The Outline about the production process for Coditany of Timeness. They later spoke with Vice in 2019 about their never-ending AI-generated death metal YouTube channel.

Depicted: The album Coditany of Timeness.

May 2019 — Holly Herndon’s AI-powered album, PROTO

Herndon and her partner Mathew Dryhurst created an AI singer named Spawn to help produce their album PROTO. Herndon described her music production process in more detail with Noisey, The Fader, ARTnews, and The Guardian. Vulture and The New Yorker reviewed PROTO in great detail. She also recently completed a Ph.D. at Stanford’s Center for Computer Research in Music and Acoustics.

Depicted: “Birth,” the first track from Holly Herndon’s PROTO. The vocals are “sung” by Herndon’s AI model, Spawn.

Aug 2019 — Chain Tripping by YACHT

In August 2019, the band YACHT released their album Chain Tripping. They used various AI models to help compose songs, write lyrics, and create artwork. Chain Tripping was nominated for a Grammy for Best Immersive Audio Album. YACHT member Claire Evans gave a Google I/O talk about producing Chain Tripping using Google’s TensorFlow Magenta AI music tool.

Depicted: The single “Downtown Dancing” from YACHT’s album Chain Tripping.

Oct 2019 — Arca and Bronze AI

The musician Arca composed a soundtrack using an AI toolkit created by the company Bronze AI, which was then played in the lobby of New York City’s Museum of Modern Art (MoMA) starting in October 2019. (The MoMA is currently temporarily closed due to the COVID-19 crisis.)

May 2020 — AiMi.fm

Around May 2020, AiMi.fm launched a new mobile app that generates electronic music based on a few bars that have been composed by human artists.

The future of generative music

The merger of generative music with hit song science

“Hit song science” is a term trademarked by Mike McCready’s Polyphonic HMI, referring to the application of data science to predicting whether or not a song will become a chart-topping hit.

So far, generative music models have focused on producing music that sounds like a human made it, but their outputs are typically not yet conditioned to be commercially successful. IBM Watson BEAT is one of the exceptions: IBM claims to have analyzed the structure of 26,000 Billboard Hot 100 songs as well as data from social media websites. There are also various efforts to apply AI to record label A&R, such as Sodatone (acquired by Warner Music Group), Instrumental, and the more recently launched Snafu Records. For any of these players, the likely next step is combining generative music with their A&R capabilities.

The merger of generative music with virtual musicians

The Internet has birthed various virtual musicians like Gorillaz, Hatsune Miku, and Lil Miquela. Behind these musicians are (presumably) human songwriters, but this doesn’t have to be the case in the future. Recently, Lil Miquela signed with the talent agency CAA.

Depicted: Lil Miquela’s single “Machine” featuring Teyana Taylor

Fans are perhaps more invested in the personality of a musician than the music itself, but this doesn’t have to prevent a future where the appearance, personality, and music are all simultaneously AI-generated.

The merger of generative music with “deepfakes”

“Deepfake” is a colloquial name for a related kind of AI-generated media, typically one where a model is used to replace one person’s face or voice with another’s. Deepfakes have been used to produce satire, mimicked political speeches, and (mostly) fake celebrity sex tapes.

Depicted: An NBC News segment demonstrates how deepfakes can be used to create fake videos of celebrities and explores potential ways to detect them.

A YouTube channel called Vocal Synthesis has started uploading numerous audio deepfakes of celebrities reciting famous texts. For example, below is a deepfake of Jay-Z reciting the “Navy SEALs copypasta” (a joke from Reddit). Again, this is not a real recording of Jay-Z. His firm, Roc Nation LLC, has filed copyright complaints with YouTube, arguing that these videos impersonate him. Blogger and former Kickstarter CTO Andy Baio interviewed the creator of Vocal Synthesis, exploring the legal and ethical issues at hand.

Depicted: A deepfake of Jay-Z reciting the Navy SEALs copypasta.

The obvious next step for this technology is to pair original composition and songwriting with the vocal delivery of famous artists. The marketing agency space150 already did this last February, producing an AI-generated music video in the style of Travis Scott.

Depicted: The song “Jack Park Canny Dope Man” (lyrics) by TravisBott, the AI trained by the ad agency space150.

Mostly-unanswered questions around AI-generated music

Who owns the copyrights to purely AI-generated music?

There is limited case law on the topic, and the governments of the world have yet to reach a consistent conclusion on whether and how works created purely by artificial intelligence can be copyrighted. Todd Carpenter, Gerald Spindler, Andres Guadamuz, Shlomit Yanisky-Ravid, and Edward Klaris and Alexia Bedat have written interesting pieces regarding copyright law and artificially intelligent IP generation.

Are artists going to accept AI-generated music?

Last November, the musician Grimes (who now goes by c) was interviewed on Caltech physicist Sean Carroll’s podcast. Grimes talked about how in the future it might be difficult for human artists to compete against high-quality AI-generated artwork. The musicians Zola Jesus and Devon Welsh flamed Grimes’ comments, referring to her take as “silicon fascist.”

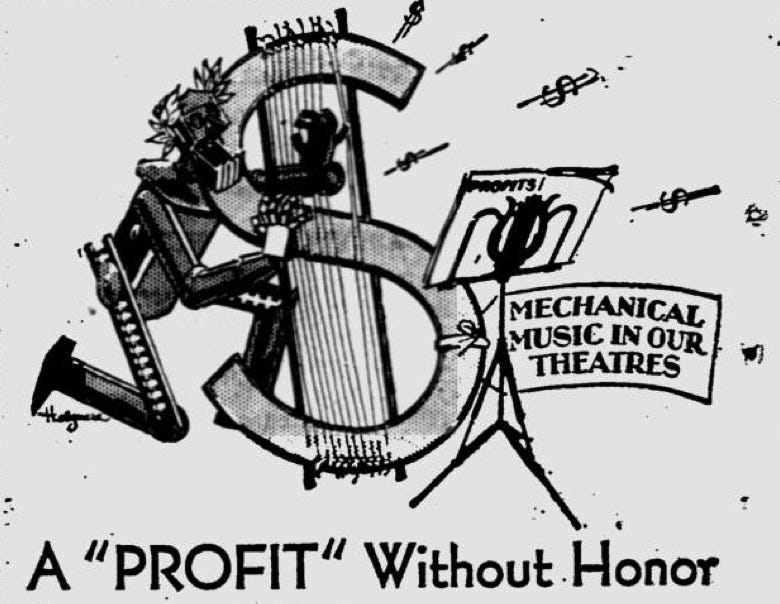

It’s hard not to see Zola Jesus and Devon Welsh’s takes as being potentially popular among other artists. Throughout history, musicians have resisted technology when they felt it could be a risk to their livelihoods. In 1906, the conductor John Philip Sousa wrote an op-ed titled “The Menace of Mechanical Music” in an effort to oppose the adoption of recorded music. In the early 1980s, the Musicians’ Union in the UK tried to fight the adoption of the synthesizer because they feared for the employment of musicians who played string instruments. Perhaps the most visual example came in the 1930s, when the American Federation of Musicians ran attack ads against the concept of recorded music in theaters. Musicians have often opposed technology, and technology has never cared.

Depicted: Advertisements run by the American Federation of Musicians during the 1930s, criticizing the developing practice of replacing live musicians in theaters with recorded music.

How can artists use AI to make music?

Google’s TensorFlow Magenta Studio offers a standalone application for Mac and Windows, as well as an Ableton Live plugin. This might currently be the cheapest and easiest way for artists to get started with production-grade machine learning models.

Additionally, AIVA, Alysia, Amadeus Code, Amper Music, Boomy, Bronze AI, Endel, Popgun, Melodrive, Mubert, and RunwayML are a handful of startups that expose some sort of AI model that assists in songwriting or generates or modifies music in some way.

Changelog

May 18, 2020: Added a link to the startup Mubert as another way to make music with AI.

June 13, 2020: Added AiMi.fm to the timeline.

January 11, 2021: Removed a broken YouTube video embed. Minor formatting changes.

February 13, 2022: Minor formatting changes. Removed unnecessary links.